Blog

Unless otherwise stated, content is shared under CC-BY-NC Licence

Calling All Digital File Format Enthusiasts! The Library of Congress Wants Your Feedback on the Recommended Formats Statement

By Kate Murray and Ted Westervelt, Library of Congress

If you are a fan of digital file formats, and we know there are lots of you out there, get your thinking cap on and editing pen ready because we want to hear from you! It’s that time of year when the Library of Congress is seeking input on its yearly review and revision of the Recommended Formats Statement.

iPRES2018 - the 15th International Conference on Digital Preservation will be co-hosted by Harvard and MIT!

The following is a guest post by the iPRES2018 Social Media Team. The interview was led by Michelle Lindlar.

iPRES - the International Conference on Digital Preservation - is without a doubt the biggest and most important conference of the year for everyone involved in digital preservation, curation and long-term data stewardship. The conference series has been bringing together researchers and practitioners from around the world for the past 15 years, with the conference locations alternating between Asia-Pacific, Europe and North America. This September, iPRES will be returning to the US and will be co-hosted by MIT and Harvard. Let’s hear directly from the co-organizers Nance McGovern and Ann Whiteside what we can expect from iPRES2018.

#DeleteFacebook and User Data Scandal Plastered Across the Headlines. Meanwhile, the National Forum on Ethics and Archiving the Web

National Forum on Ethics and Archiving the Web, New York, 22-24 March 2018

Web archives can serve as witness to crimes, corruption, and abuse; they are powerful advocacy tools; they support community memory around moments of political change, cultural expression, or tragedy. At the same time, they can cause harm and facilitate surveillance and oppression.

Tomorrow I jet off to the Big Apple to attend the National Forum on Ethics and Archiving the Web hosted by Rhizome, the Documenting the Now project, and partners.

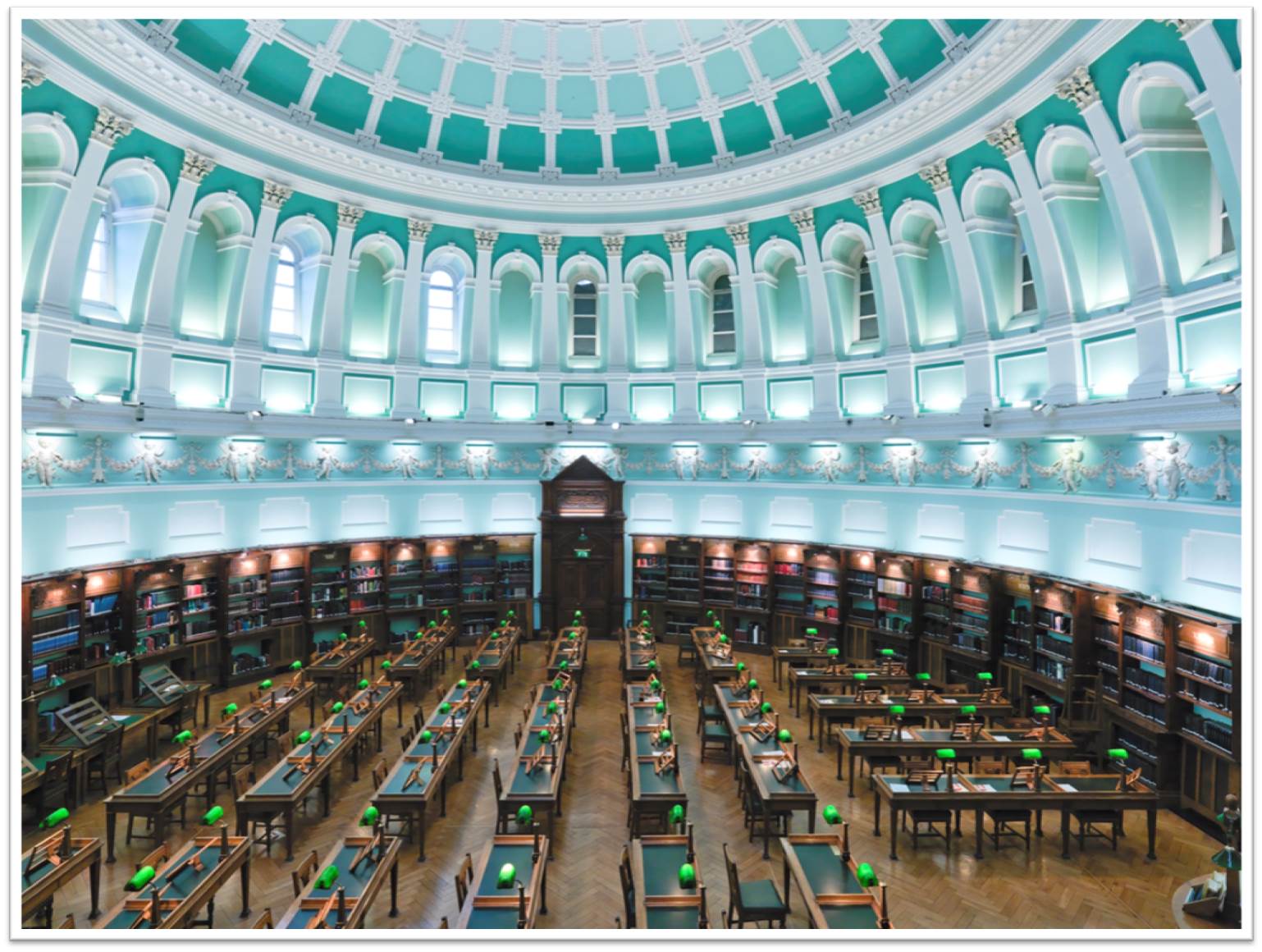

Digital Collections at the National Library of Ireland

by Jenny Doyle, Joanna Finegan, Della Keating and Maria Ryan, Digital Collections Department, National Library of Ireland.

Advocating for advocacy

DPC members might remember some work we (on the Advocacy & Communications Sub-Committee) started a while ago to create an ‘Executive Briefing Pack’…?

Born out of calls from the Coalition’s membership for more resources to support internal advocacy, the Executive Briefing Pack would sit alongside the Digital Preservation Handbook and the Digital Preservation Business Case Toolkit, providing easy access to the straightforward points that need to be understood before decisions on preservation policies can be made and implemented.

Within the pack, a ‘grab bag’ of handy ready-made goodies would enable digital preservationists across all sectors and organisation types to create a tailor-made document/presentation/letter/campaign (delete as applicable) to persuade whoever needed persuading within their organisation that DIGITAL PRESERVATION IS A GOOD THING!

Australasia Preserves: Establishing a digital preservation community of practice

On February 16 2018, The University of Melbourne Library Digital Scholarship team organised and hosted the inaugural “Australasia Preserves” event. This event brought together 75 people interested in digital preservation in Australia and New Zealand, from a variety of different institutions and organisations.

Our goal was to start to build a community of practice for digital preservation in our region for all interested people and organisations, regardless of institutional affiliation or skill level. We’ve wanted to get a community like this together for a while. Because we are a very small team working on a very big digital preservation project, we have a keen interest in generating greater connections with other digital preservation initiatives, projects, and work being done, and in exploring opportunities for collaboration.

Your sub-committee needs you!

Words by William Kilbride and Sarah Middleton

In the last few months, we’ve seen a fair bit of ‘newness’ at the DPC: a new chair, a new strategic plan and a new structure have all set us on an exciting path for the coming years. And the new structure and strategic plan, in particular, bring with them new opportunities for DPC members to become more involved in what we do and how we do it.

You may recall that the DPC has a number of sub-committees that help oversee our work and keep us focussed on member needs. There are currently four sub-committees covering our six main areas of work. These are:

- Community Engagement: enabling a growing number of agencies and individuals in all sectors and in all countries to participate in a dynamic and mutually supportive digital preservation community.

- Advocacy: campaigning for a political and institutional climate more responsive and better informed about the digital preservation challenge; raising awareness about the new opportunities that resilient digital assets create.

- Workforce Development: providing opportunities for our members to acquire, develop and retain competent and responsive workforces that are ready to address the challenges of digital preservation.

- Capacity Building: supporting and assuring our members in the delivery and maintenance of high quality and sustainable digital preservation services through knowledge exchange, technology watch, research and development.

- Good Practice and Standards: identifying and developing good practice and standards that make digital preservation achievable, supporting efforts to ensure services are tightly matched to shifting requirements.

- Management and Governance: ensuring the DPC is a sustainable, competent organisation focussed on member needs, providing a robust and trusted platform for collaboration within and beyond the Coalition.

Preserving the Welsh Record: A bit at a time

Sally McInnes is Chair of the ARCW Digital Preservation Group and Head of Unique Collections and Collections Care at the National Library of Wales

On International Digital Preservation Day last year, the Archives and Records Council Wales (ARCW) published the first national digital preservation policy. The policy was produced in recognition of the significant strategic challenge which digital preservation presents to organisations in Wales which are creating, providing access to and preserving digital information. The policy also aims to raise awareness of the importance of effective digital preservation amongst archive institutions, practitioners and managers, by stressing that transparent, responsible and accountable activity relies upon the ability to evidence decision making and to provide a reliable audit trail.

Let's Get Together and Feel Alright.....

The Digital Preservation community is growing. The DPC adding its 74th member this month (we’ve doubled in size over the past 5 years) is a good example of this. But it’s not just about absolute numbers. International Digital Preservation Day showed that the growth is multi-faceted, with geographical and sectoral expansion being the most obvious changes. Sooner or later we’re going to be confronted with a serious test: how can a small community become a big community while still being welcoming? Is it possible that we can learn from other communities? I’ve been thinking about how I can draw from my experiences beyond my work life to help answer these questions.

Sic Transit Gloria Digitalis: The BitList in Beta

I spent most of the latter half of November locked in a life-or-death battle with Microsoft Excel, arranging, sorting and making sense of entries to the BitList. I seem to have survived this ordeal-by-spreadsheet, even if myriad little boxes containing doubtful formulae dance restlessly through the half-darkness of my closed eyes.

The BitList is a simple enough proposition - a list of digital content and types that are called out for special attention because the digital preservation community has particular concerns over them. It’s a list created by open invitation to the whole digital preservation community and validated by an international expert panel drawn from the DPC membership. The impact is immediate but, because the DPC will maintain the list over the longer term, we can also move things up and down and in that way track progress over time. The BitList therefore means we can identify honest-to-goodness problems and we can celebrate when those problems are resolved.