David S. H. Rosenthal is a retired Chief Scientist for the LOCKSS Program at Stanford Libraries.

Nearly one-third of a trillion Web pages at the Internet Archive is impressive, but in 2014 I reviewed the research into how much of the Web was then being collected and concluded:

Somewhat less than half ... Unfortunately, there are a number of reasons why this simplistic assessment is wildly optimistic.

Costa et al ran surveys in 2010 and 2014 and concluded in 2016:

during the last years there was a significant growth in initiatives and countries hosting these initiatives, volume of data and number of contents preserved. While this indicates that the web archiving community is dedicating a growing effort on preserving digital information, other results presented throughout the paper raise concerns such as the small amount of archived data in comparison with the amount of data that is being published online.

I revisited this topic earlier this year and concluded that we were losing ground rapidly. Why is this? The reason is that collecting the Web is expensive, whether it uses human curators, or large-scale technology, and that Web archives are pathetically under-funded:

The Internet Archive's budget is in the region of $15M/yr, about half of which goes to Web archiving. The budgets of all the other public Web archives might add another $20M/yr. The total worldwide spend on archiving Web content is probably less than $30M/yr, for content that [probably] cost hundreds of billions to create.

My rule of thumb has been that collection takes about half the lifetime cost of digital preservation, preservation about a third, and access about a sixth. So the world may spend only about $15M/yr collecting the Web.
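The arithmetic behind that estimate can be sketched in a few lines. This is only an illustration: the $30M/yr total is the upper bound quoted above, and the names `LIFETIME_COST_SHARES` and `split_budget` are hypothetical, not taken from any tool.

```python
from fractions import Fraction

# Rule-of-thumb shares of lifetime digital preservation cost, as stated in the text.
LIFETIME_COST_SHARES = {
    "collection": Fraction(1, 2),
    "preservation": Fraction(1, 3),
    "access": Fraction(1, 6),
}


def split_budget(total_musd, shares=LIFETIME_COST_SHARES):
    """Split a yearly budget (in millions of US dollars) by the given shares."""
    if sum(shares.values()) != 1:
        raise ValueError("shares must sum to 1")
    # Fractions keep the thirds and sixths exact until the final conversion.
    return {name: float(Fraction(total_musd) * share) for name, share in shares.items()}


if __name__ == "__main__":
    # Assumption: the "probably less than $30M/yr" figure is used as the total.
    for name, musd in split_budget(30).items():
        print(f"{name}: ${musd:.0f}M/yr")
```

With a $30M/yr total this gives $15M/yr for collection, $10M/yr for preservation, and $5M/yr for access.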

Preserving the Web and other digital content for posterity is primarily an economic problem. With an unlimited budget, collection and preservation aren't a problem. The reason we're collecting and preserving less than half the classic Web of quasi-static linked documents is that no-one has the money to do much better. The other half is more difficult and thus more expensive. Collecting and preserving the whole of the classic Web would need the current global Web archiving budget to be roughly tripled, perhaps an additional $50M/yr.

Then there are the much higher costs involved in preserving the much more than half of the dynamic "Web 2.0" we currently miss.

|

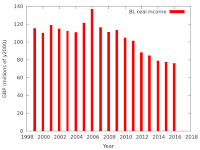

| British Library real income |

If we are to continue to preserve even as much of society's memory as we currently do, we face two very difficult choices: either find a lot more money, or radically reduce the cost per site of preservation.

It will be hard to find a lot more money in a world where library and archive budgets are decreasing. For example, the graph shows that the British Library's income has declined by 45% in real terms over the last decade.

And, unfortunately, as the demands of advertising increase, the per-site archiving cost also increases. John Berlin at Old Dominion University has a fascinating detailed examination of why CNN.com has been unarchivable since November 1st, 2016:

CNN.com has been unarchivable since 2016-11-01T15:01:31, at least by the common web archiving systems employed by the Internet Archive, archive.is, and webcitation.org. The last known correctly archived page in the Internet Archive's Wayback Machine is 2016-11-01T13:15:40, with all versions since then producing some kind of error (including today's; 2017-01-20T09:16:50). This means that the most popular web archives have no record of the time immediately before the presidential election through at least today's presidential inauguration.

The TL;DR is that:

the archival failure is caused by changes CNN made to their CDN; these changes are reflected in the JavaScript used to render the homepage.

The detailed explanation takes about 4400 words and 15 images. The changes CNN made appear intended to improve the efficiency of their publishing platform. From CNN's point of view the benefits of improved efficiency vastly outweigh the costs of being unarchivable (which in any case CNN doesn't see).

Alas, the W3C's mandating of DRM for the Web means that the ingest cost for much of the Web's content will become infinite. It simply won't be legal to ingest it.

Almost all the Web content that encodes society's memory is supported by one or both of two business models: subscription, or advertising. Currently, neither model works well. Web DRM will be perceived as the answer to both. Subscription content, not just video but newspapers and academic journals, will be DRM-ed to force readers to subscribe. Advertisers will insist that the sites they support DRM their content to prevent readers running ad-blockers. DRM-ed content cannot be archived.

So for International Digital Preservation Day I will end with a call to action. Please:

- Use the Wayback Machine's Save Page Now facility to preserve pages you think are important.

- Support the work of the Internet Archive by donating money and materials.

- Make sure your national library is preserving your nation's Web presence.

- Push back against any attempt by W3C to extend Web DRM.
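
For the first item in the list above, a capture request can be expressed as a URL. The sketch below assumes the long-standing `https://web.archive.org/save/` prefix form of Save Page Now, which may change or be rate-limited; the function name `save_page_now_url` is hypothetical. It only builds the URL and makes no network request.

```python
from urllib.parse import urlparse

# Assumption: Save Page Now accepts the target URL appended to this prefix.
SAVE_PREFIX = "https://web.archive.org/save/"


def save_page_now_url(page_url: str) -> str:
    """Return the Save Page Now URL for an absolute http(s) page URL."""
    parts = urlparse(page_url)
    if parts.scheme not in ("http", "https") or not parts.netloc:
        raise ValueError(f"not an absolute http(s) URL: {page_url!r}")
    return SAVE_PREFIX + page_url


if __name__ == "__main__":
    # Opening the printed URL in a browser should ask the Wayback Machine
    # to capture the page, subject to the assumption above.
    print(save_page_now_url("https://example.com/"))
```

Keeping the request itself out of the sketch is deliberate: whether a capture succeeds depends on the archive's current policies and on the page, as the CNN.com example shows.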