Dr Arif Shaon is Senior Digital Curation Specialist at the Qatar National Library.

Efficient long-term digital preservation requires a series of operations to be undertaken throughout the lifecycle of a digital object. The task of preparing content for preservation, i.e., creating a Submission Information Package (SIP)[1], is a crucial step in the overall workflow, as this is where the semantic and structural quality of both data and metadata is set for accessing and using the content in the future. The lower the quality of a SIP, the lower the longevity of the content being preserved.

For large collections of digital objects acquired from external institutions, the task of preparing SIPs can be very challenging. This is especially true where the policies for data and metadata quality of the acquiring organization cannot be easily aligned with those of the donor or contributing organizations.

Qatar National Library[2] has an ongoing initiative[3] for digitally repatriating the nation’s cultural heritage through collaboration with other national libraries (such as the British Library and the French National Library) to identify and digitize archival collections relating to Qatar and the Gulf region. The Library aims to repatriate this content to Qatar by digitizing it, archiving it, and disseminating it through its online platforms, such as the Qatar Digital Library (QDL)[4].

Digital preservation is often seen as an important part of the long-term digital curation process for ensuring continued and value-added accessibility and usability of digital objects. The task of preparing SIPs for digital preservation can itself be considered curation, given the effort required to meticulously ensure the semantic and structural quality of the content before ingestion into a digital preservation system, where it takes the form of an Archival Information Package (AIP)[5]. This process effectively adds value to the underlying content by:

- ensuring or improving the semantic quality of metadata, which typically involves the use and/or validation of controlled terms or authority records for information related to people, places, and organizations;
- ensuring the structural quality of metadata by checking its validity against the corresponding schema(s);
- improving the structural longevity of metadata by transforming non-compliant formats, such as metadata embedded in human-readable narratives in Word documents or on the Web, into machine-processable formats, such as XML or JSON;
- reviewing and improving the quality of digitized content, such as compression quality and compliance with relevant FADGI[6] guidelines and standards;
- capturing pre-ingest events and provenance information as part of the metadata, including the rationale for acquiring the content and information related to any or all validation or quality assurance activities undertaken on the data and/or metadata; and
- standardizing the overall structure of the directory that encapsulates the data, metadata, and associated digital objects, such as rights and licensing information.
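
To make the last point in the list above concrete, the following is a minimal sketch of how a standardized SIP directory could be checked before ingest. The directory and file names (`objects`, `metadata`, `descriptive.xml`, `rights.xml`) are hypothetical and chosen only for illustration; they are not the Library's actual SIP specification.

```python
from pathlib import Path

# Hypothetical SIP layout, assumed for illustration only.
REQUIRED_DIRS = ("objects", "metadata")
REQUIRED_FILES = ("metadata/descriptive.xml", "metadata/rights.xml")


def check_sip_layout(sip_root):
    """Return a list of layout problems; an empty list means the layout matches."""
    root = Path(sip_root)
    problems = []
    for name in REQUIRED_DIRS:
        if not (root / name).is_dir():
            problems.append(f"missing directory: {name}")
    for name in REQUIRED_FILES:
        if not (root / name).is_file():
            problems.append(f"missing file: {name}")
    # A SIP without any content files is almost certainly incomplete.
    objects = root / "objects"
    if objects.is_dir() and not any(p.is_file() for p in objects.rglob("*")):
        problems.append("objects directory contains no files")
    return problems
```

Returning a list of problems rather than raising on the first one means a single run can report every structural issue, which is convenient when the findings have to be sent back to a partner institution.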

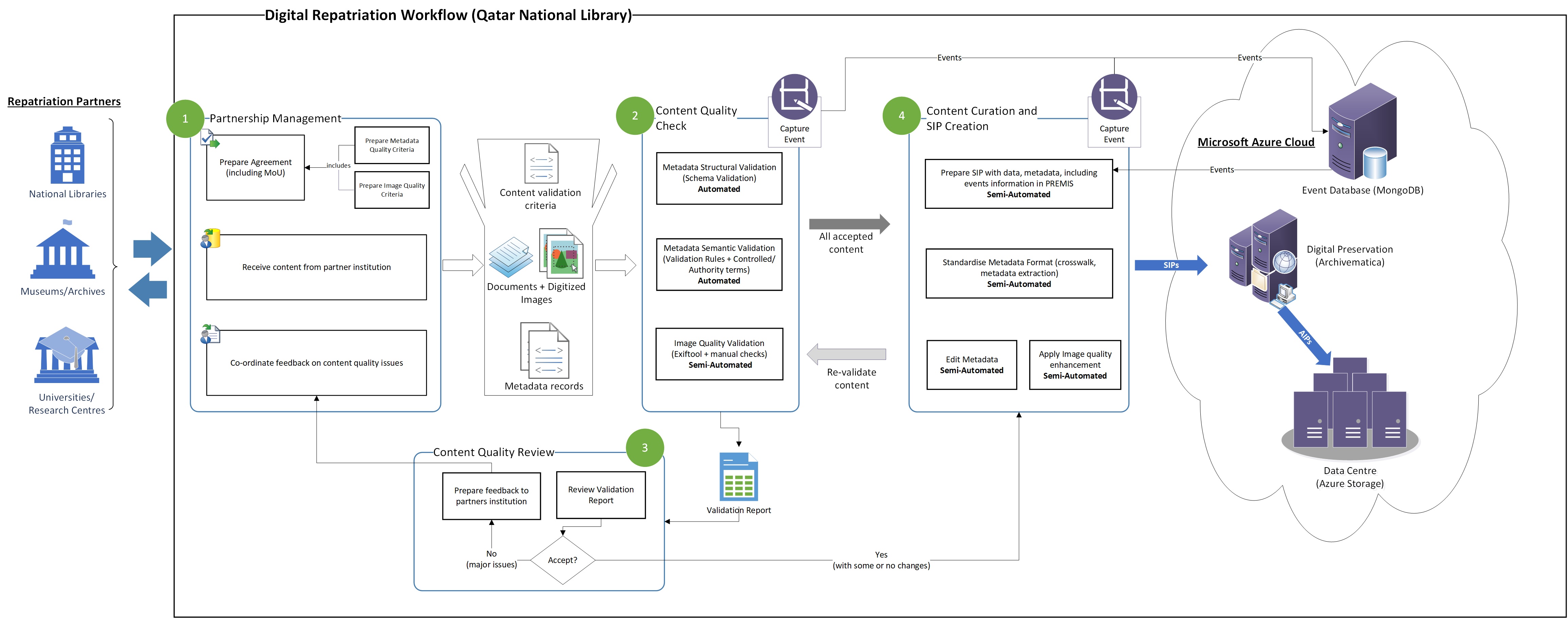

Figure 1: Qatar National Library Digital Repatriation Workflow

As illustrated in Figure 1, curating the content acquired through the Library’s large-scale digital repatriation activities for digital preservation requires several manual and (semi-)automated processes that are orchestrated in a wider workflow. The effective execution of this workflow requires institution-level collaboration involving careful coordination of efforts from multiple Library departments. A crucial step in the workflow (“Partnership Management” in Figure 1) is to mutually agree on a list of technically feasible content quality assurance/validation criteria with the repatriation partner institution. Depending on the level of alignment between the related data and metadata policies of the Library and the partner institution, this often involves a significant amount of effort from both parties to come to a mutually agreeable arrangement. For content that the Library deems of significant value to its repatriation goals and collections, it is often necessary to adjust the Library’s current content quality policy to ensure successful acquisition of the content. For example, it may be necessary to transform or convert data (via file format conversion) or metadata (via crosswalk or mapping) to supported format(s) when the corresponding content of high value (such as rare manuscripts or archival records that no other institutions hold) is acquired from a partner institution’s legacy system that is not directly interoperable with the relevant Library systems. Nevertheless, efforts are made to communicate any content quality issues identified by the validation processes to the partner institution and arrange for re-submission of content, if possible.

To ensure effective maintenance and sustainability of the repatriation workflow, the underlying processes, especially critical steps such as metadata and data validation and SIP creation, are automated where possible, while manual checks are retained to ensure the overall quality and accuracy of the outcomes. For example, the task of checking the quality of digitized content involves automated checking of the embedded image metadata using a commonly used open-source tool, ExifTool[7], followed by a manual check by the Library’s Digitization team to ensure all relevant quality criteria are addressed in a meticulous yet timely manner.
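
As an illustration of the automated part of this check, the sketch below reads embedded metadata with ExifTool's JSON output and compares two tags against acceptance thresholds. The threshold values and the choice of tags are assumptions made for the example, not the Library's actual criteria, and the sketch assumes that `exiftool` is installed and that `XResolution` is expressed in pixels per inch.

```python
import json
import subprocess

# Hypothetical acceptance thresholds, not the Library's actual criteria.
MIN_DPI = 400
MIN_BITS_PER_SAMPLE = 8


def read_image_metadata(path):
    """Run ExifTool (must be on PATH) and return the file's metadata as a dict."""
    result = subprocess.run(
        ["exiftool", "-json", "-n", str(path)],  # -n: numeric, unformatted values
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)[0]  # one dict per input file


def check_image_metadata(meta):
    """Return a list of problems found in an ExifTool metadata dict."""
    problems = []
    xres = meta.get("XResolution")
    if xres is None:
        problems.append("XResolution missing")
    elif float(xres) < MIN_DPI:  # assumes the unit is pixels per inch
        problems.append(f"resolution {xres} below {MIN_DPI}")
    bits = meta.get("BitsPerSample")
    if bits is None:
        problems.append("BitsPerSample missing")
    else:
        # Multi-channel images report one value per channel, e.g. "8 8 8".
        lowest = min(int(v) for v in str(bits).split())
        if lowest < MIN_BITS_PER_SAMPLE:
            problems.append(f"bit depth {lowest} below {MIN_BITS_PER_SAMPLE}")
    return problems
```

Keeping the ExifTool call separate from the rule check lets the rules be exercised on saved metadata without the tool installed, and any file that returns problems can then be routed to the manual check.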

Information about all key processes and their outcomes is captured as PREMIS events[8], which are later included in the SIP for ingestion into the Library’s digital preservation system (based on Archivematica[9], hosted in the Library’s subscription of the Microsoft Azure Cloud). The rationale here, as recommended by the OAIS reference model[10], is to include detailed information about all key events that may have affected a digital object throughout its lifecycle, as part of its post-ingest state (i.e., as AIP) in the Library’s data centre on Azure.
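
To show what such a record might look like, here is a minimal sketch that builds a single PREMIS 3 event element with Python's standard library. The function name, the example values, and the choice of a UUID identifier are illustrative assumptions. The sketch omits linking agent and object identifiers and does not validate the result against the PREMIS schema, so it should not be read as the Library's actual implementation.

```python
import uuid
import xml.etree.ElementTree as ET
from datetime import datetime, timezone

PREMIS_NS = "http://www.loc.gov/premis/v3"
ET.register_namespace("premis", PREMIS_NS)


def _sub(parent, name, text=None):
    """Append a PREMIS-namespaced child element, optionally with text."""
    element = ET.SubElement(parent, f"{{{PREMIS_NS}}}{name}")
    if text is not None:
        element.text = text
    return element


def build_premis_event(event_type, outcome, detail, note):
    """Build a premis:event element describing one pre-ingest activity."""
    event = ET.Element(f"{{{PREMIS_NS}}}event")
    identifier = _sub(event, "eventIdentifier")
    _sub(identifier, "eventIdentifierType", "UUID")
    _sub(identifier, "eventIdentifierValue", str(uuid.uuid4()))
    _sub(event, "eventType", event_type)
    _sub(event, "eventDateTime", datetime.now(timezone.utc).isoformat())
    detail_info = _sub(event, "eventDetailInformation")
    _sub(detail_info, "eventDetail", detail)
    outcome_info = _sub(event, "eventOutcomeInformation")
    _sub(outcome_info, "eventOutcome", outcome)
    outcome_detail = _sub(outcome_info, "eventOutcomeDetail")
    _sub(outcome_detail, "eventOutcomeDetailNote", note)
    return event


# Illustrative values only.
event = build_premis_event(
    "validation", "pass",
    "Embedded image metadata checked with ExifTool",
    "All checked tags met the agreed criteria",
)
xml_text = ET.tostring(event, encoding="unicode")
```

Each validation or quality assurance step could emit one such element, and the collected events could then be written into the metadata that accompanies the SIP.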

As a new national library aspiring to position itself as a “knowledge hub” for all things related to Qatari and Middle Eastern cultural heritage through its ambitious digital repatriation initiative, Qatar National Library understands the need for efficient institutional policies and procedures with sound underlying principles for data and metadata quality. While it may sometimes be necessary to adjust parts of the quality assurance procedures for acquiring content of considerable importance and value, strong adherence to the overall policy, or at least to the underlying quality assurance principles, is essential for ensuring the sustainability of the curation workflow, which effectively determines the success or otherwise of the digital preservation of the repatriated content. The Library has adopted the ITIL Continual Service Improvement[11] model for all IT-related processes and workflows, including the digital preservation and curation workflows, to contribute effectively to its continuous growth and progress as a leading provider of library services in the region.

[1] 6.2.1 Submission Information Package (SIP) - https://www.iasa-web.org/tc04/submission-information-package-sip

[2] Qatar National Library - https://www.qnl.qa

[3] Digital Preservation to Support Large-Scale Digital Repatriation Initiative of Qatar National Library - https://www.dpconline.org/blog/large-scale-digital-repatriation-qnl

[4] An online archive that covers modern history and culture of the Gulf and wider region - https://www.QDL.qa

[5] 6.3.1 Archival Information Package (AIP) - https://www.iasa-web.org/tc04/archival-information-package-aip

[6] Guidelines - Federal Agencies Digital Guidelines Initiative - http://www.digitizationguidelines.gov/guidelines/

[7] ExifTool by Phil Harvey - https://exiftool.org/

[8] PREMIS Events Controlled Vocabulary - https://www.loc.gov/standards/premis/v3/preservation-events.pdf

[9] Archivematica: open-source digital preservation system - https://www.archivematica.org/en/

[10] OAIS Reference Model (ISO 14721) - http://www.oais.info/

[11] ITIL Continual Service Improvement - https://www.invensislearning.com/blog/itil-continual-service-improvement/