Angela Beking is Data Policy and Research Analyst/Analyste des politiques et de la recherche en les données at Privy Council Office/Bureau du Conseil privé (Canada).

We often speak of the critical importance of upstream engagement in digital preservation work. If we wait until the end of the records lifecycle to begin speaking with Producers about the opportunities provided by digital preservation, we are often too late. That box of unreadable floppy disks. Those files in a format that is not recognizable, let alone readable. That information that was stored on a social media platform on the assumption that it would be around forever (looking at you, Google+). All of these examples speak to the need for active and engaged digital preservation practice.

But how do we actually “do” upstream engagement? What value can we deliver to Producers, and what can we learn in return? I suggest that there are lessons to be learned in breaking down barriers through upstream engagement that will encourage – or even require – our discipline to reflect on its core assumptions.

This year, I am enjoying the ultimate opportunity to “break down barriers” as a Data Policy and Research Analyst at Canada’s Privy Council Office (PCO), on secondment from my Digital Archivist position at Library and Archives Canada (LAC). PCO supports the Canadian Prime Minister and Cabinet, helping the government to implement its vision, goals, and decisions in a timely manner. The work is very fast-paced, and requires quick access to reliable data to support effective decision-making.

The Information and Data Management Services team at PCO is working to unlock the value of PCO’s data by first knowing what we have. A comprehensive data inventory will allow for cross-sectoral collaboration, engagement, and innovation with different data stores. As I looked over the inventory, my first instinct was to add fields that would allow us to capture information crucial to digital preservation risk assessment. Which of the data stores have enduring value? Where are they stored? In what format are they stored? What is the extent (number of files, number of bytes)?
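For readers who want to see what answering the extent and format questions might look like in practice, here is a minimal sketch in Python. It assumes the data store is an ordinary folder on a file system, which will not hold for every store, and the function name `summarize_extent` is made up for illustration. It also treats the file extension as a rough stand-in for format, which a real risk assessment would want to verify with a proper format identification tool.

```python
from collections import Counter
from pathlib import Path


def summarize_extent(root):
    """Count files, total bytes, and file extensions under a folder.

    Hypothetical helper for illustration only; it assumes the data store
    is a plain directory tree that this script is allowed to read.
    """
    file_count = 0
    total_bytes = 0
    formats = Counter()
    for path in Path(root).rglob("*"):
        if path.is_file():
            file_count += 1
            total_bytes += path.stat().st_size
            # The extension is only a rough proxy for the actual format.
            formats[path.suffix.lower() or "(none)"] += 1
    return {"files": file_count, "bytes": total_bytes, "formats": dict(formats)}
```

Calling `summarize_extent("some/folder")` returns a small dictionary that could be copied into the extent and format fields of an inventory entry.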

I quickly learned that data management is, perhaps, the wise older cousin (auntie?) of digital preservation. Many of these questions are already being asked. The inventory investigates everything from data criticality to server location(s) to access restrictions. When we know our data through good data management practice, the barriers to good digital preservation are greatly reduced.

The barrier I did not sufficiently anticipate, however, is that digital preservation assumes that there is (or will be) a preservation object. We need a static “thing” to preserve, whether it be a flattened data set, a Word document, a PDF, a PowerPoint file, or an Excel sheet. Enter data and information that, for want of a better description, never sits still.

Microsoft 365 and similar tools shake the foundations of how information is derived from data because the result is never organically static. The visuals in a Power BI dashboard refresh when, for example, the data provided via connection to Analysis Services updates. The dashboard is by nature an interactive display of information derived from data. If the data changes, so do the insights presented by the dashboard. If a user wants a new or different insight, perhaps by a different visual or graph, the variables can be changed, creating an entirely new set of information, in a few quick clicks. This constant updating is attractive and useful for executives who need to make timely and informed decisions. It makes sense that dashboards built in Power BI will start to replace PowerPoint presentation files, which require manual updating.

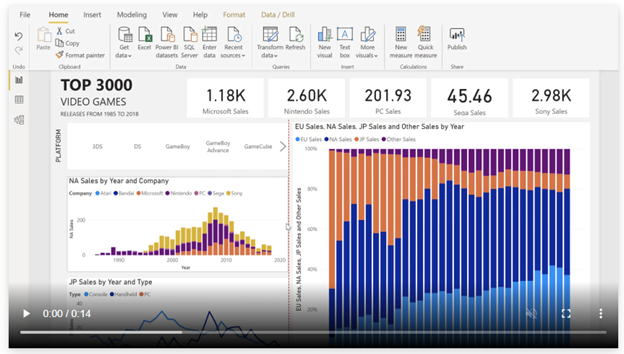

A deceptive illustration. While made static via screenshot, this Power BI dashboard is anything but fixed. Source: https://powerbi.microsoft.com/en-us/desktop/

But what, then, is the “preservation object”? Even if technologically possible, does it make sense for digital preservation professionals to ask Producers for “static” representations of content that was always meant to shift? For example, a Power BI dashboard can be exported to CSV, Excel (.xlsx, .xlsm), or Power BI Desktop (.pbix). But would such an export be an accurate representation of how that data and information were used to drive decision-making? Are there “non-static” ways we could do digital preservation? Is fixity the only way?
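To make the tension concrete, here is a small sketch of what a conventional fixity check does with two exports of the same dashboard. The two CSV snippets are invented for illustration and stand in for exports taken before and after a data refresh. SHA-256 is simply one commonly used checksum, not a claim about what any particular institution uses.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 checksum of some bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def fixity_ok(data: bytes, expected: str) -> bool:
    """A fixity check passes only if the bytes are exactly unchanged."""
    return sha256_hex(data) == expected


# Invented sample exports of one dashboard, before and after a refresh.
export_before = b"region,total\nEast,100\nWest,250\n"
export_after = b"region,total\nEast,100\nWest,275\n"

# Checksum recorded when the first export was taken.
recorded = sha256_hex(export_before)

print(fixity_ok(export_before, recorded))  # True
print(fixity_ok(export_after, recorded))   # False
```

The second check fails even though nothing has gone wrong. The dashboard did exactly what it was built to do, and the checksum can only tell us that the export is no longer the same set of bytes.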

Breaking down this barrier involves ongoing, meaningful engagement with Producers. It means grappling with technology and facing difficult questions in order to keep pace with the way we use technology to consume and digest information. I do not have answers to the fundamental question I pose here – what is “fixity” when nothing is “fixed”? – but I do think that the way forward to answering this question is to literally (virtually?) “sit” with data and information Producers. What is their data? How do they derive information of value from that data? What needs to be preserved, from a Producer’s point of view? The raw data? The information that is derived from the raw data? Some sort of representation of the consistently shifting nature of that data and information, as it was used to support decision-making? Discussing these questions will help us move forward. The foundational concept of fixity feels shakier when one goes to sit in the world of data.
